AI Glasses with “Lean LLMs”: A Co-Creation Strategy (N=1 and R=N) for Trust and Innovation

On May 21, 2025, at the Google I/O developer conference, Google unveiled a striking concept: “Android XR” AI glasses. The glasses have a sleek black frame, an integrated display, a camera, and messaging functions, but their most compelling feature is the integrated Google AI assistant, which can understand commands, recognize objects, and even maintain short-term memory. Meta had already introduced its own product, the Ray-Ban Meta smart glasses, a sign that AI glasses are rapidly gaining strategic importance.

This trend reveals not only a technological direction but also a fundamental shift in business strategy. It reflects a trend I discussed in a previous article: software-first companies such as OpenAI and Microsoft are gradually developing their own devices and becoming combined hardware-software players on two-sided platforms. The development of AI glasses represents a push toward vertical integration, in which these companies aim to control both the software and hardware layers to maximize commercial value.

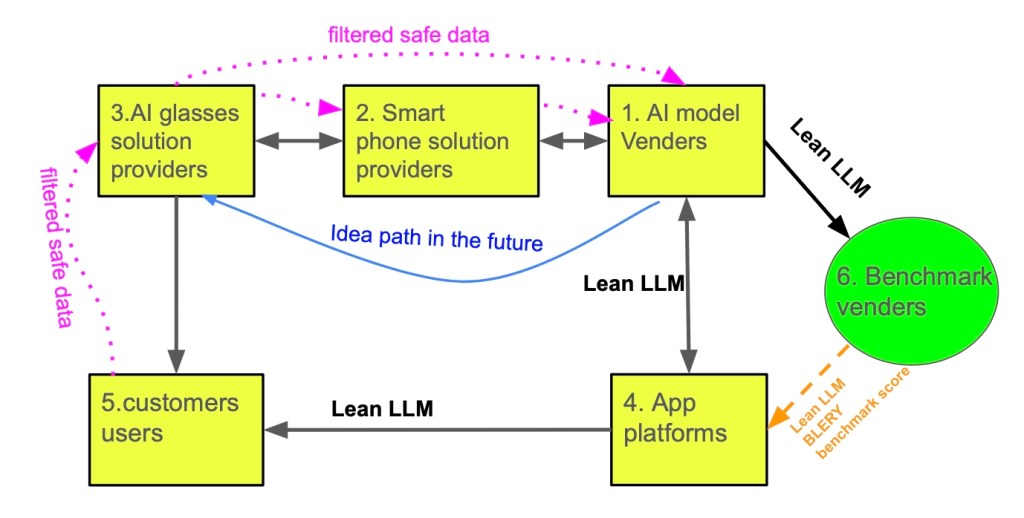

All of this signals that AI glasses will be a major focus of the tech industry in the coming years. Many companies will invest in R&D and try to maximize their business interests through a “one-stop shop” strategy that integrates hardware and software. However, if we apply the co-creation concept that C.K. Prahalad and M.S. Krishnan set out in “The New Age of Innovation: Driving Co-Created Value Through Global Networks,” which emphasizes N=1 (the needs of each individual consumer) and R=N (resources accessed through networks of partners and alliances), AI glasses can develop toward a future that is more open, more flexible, and more closely aligned with consumer demand.

1. Edge AI Challenges and the Core of Privacy: Applying the BLERP Measurement Tool

AI glasses are a classic “edge AI” application: the ability to compute and make decisions directly on the device is crucial. Of the five indicators in Jeff Bier’s BLERP (Bandwidth, Latency, Economics, Reliability, Privacy) framework for evaluating edge AI, I consider Privacy the most critical for the widespread adoption of smart glasses. While we enjoy the convenience that artificial intelligence brings, it is even more urgent that we manage it effectively at the same time; information security matters far more than our current appetite for innovation.
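One way to encode “privacy first” when comparing designs is to treat Privacy as a hard threshold and then rank the remaining options by privacy before the other four indicators. The sketch below is only an illustration under stated assumptions: the BLERP indicator names come from Bier, but the 0 to 10 scores, the threshold of 7, the design names, and the ranking rule are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Blerp:
    """Hypothetical 0-10 scores for the five BLERP indicators; higher is better
    (e.g. a high latency score means a fast response)."""
    bandwidth: float
    latency: float
    economics: float
    reliability: float
    privacy: float

    def others_mean(self) -> float:
        """Average of the four non-privacy indicators, used only as a tie-breaker."""
        return (self.bandwidth + self.latency + self.economics + self.reliability) / 4

def rank_privacy_first(options: dict[str, Blerp], min_privacy: float = 7.0) -> list[str]:
    """Drop options below the (hypothetical) privacy threshold, then rank the rest
    by privacy, breaking ties with the mean of the other four indicators."""
    eligible = {name: s for name, s in options.items() if s.privacy >= min_privacy}
    return sorted(eligible,
                  key=lambda n: (eligible[n].privacy, eligible[n].others_mean()),
                  reverse=True)

# Placeholder scores for three unnamed designs; these are not measurements.
options = {
    "design_a": Blerp(bandwidth=3, latency=4, economics=7, reliability=5, privacy=3),
    "design_b": Blerp(bandwidth=8, latency=7, economics=7, reliability=7, privacy=8),
    "design_c": Blerp(bandwidth=8, latency=6, economics=6, reliability=6, privacy=8),
}
print(rank_privacy_first(options))
```

A weighted sum would let a strong showing on bandwidth or cost compensate for weak privacy; the threshold-plus-lexicographic ordering deliberately does not.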

Imagine AI glasses becoming an “agent” that can “see, hear, and speak” about everything we encounter daily. The amount of private personal data it collects will be enormous. If this data is transmitted to remote servers for processing, the risk of privacy breaches is concerning. How can consumers have the confidence to trust an AI company with their most private information? This is a huge challenge. After all, breakthroughs in AI technology, especially the optimization of large language models, do require vast amounts of raw data for training, which creates a dilemma between privacy protection and technological progress. Ensuring user privacy while still meeting the data needs of AI model optimization is therefore key to the widespread acceptance of smart glasses.

2. The Smartphone as an Intermediary for Edge Computing and Privacy Protection

To address privacy concerns, my proposed vision is to position the smartphone as the “intermediary” computing center for smart glasses. In this ideal architecture:

- Smartphone as the Inference Center: Because AI glasses have limited computing power and memory, the smartphone is designated as the device that performs AI inference. Using RAG (Retrieval-Augmented Generation) and a vector database, the phone keeps raw, sensitive personal data (such as images, voice, and location) on the user’s own device.

- Localized AI Inference: Smart glasses use the smartphone’s computing power to perform AI inference and agent services on the data stored on the phone. Most sensitive data processing is therefore done locally on the user’s phone, with no need to upload the data to cloud servers. This fundamentally reduces the risk of privacy breaches, gives users greater control over their data, and increases their trust in AI glasses applications.
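To make the “phone as inference center” idea concrete, here is a minimal sketch of local retrieval on the phone. It assumes a toy bag-of-words vector in place of a real on-device embedding model, and the names `LocalVectorStore` and `build_prompt` are hypothetical. What it illustrates is that the personal memories, their vectors, and the retrieval step all stay on the phone; only the assembled prompt would be handed to a local lean model, whose call is not shown.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field

def embed(text: str) -> Counter:
    """Toy stand-in for an on-device embedding model: a sparse bag-of-words vector.
    A real system would run a neural encoder for text, images, or audio on the phone."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors (missing tokens count as 0)."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

@dataclass
class LocalVectorStore:
    """Personal memories and their vectors, held only in the phone's own storage
    (kept in memory here for simplicity)."""
    items: list[tuple[str, Counter]] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        """Return the k stored memories most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

def build_prompt(store: LocalVectorStore, question: str, k: int = 2) -> str:
    """Assemble a retrieval-augmented prompt for a local lean model (model call not shown)."""
    context = "\n".join(f"- {memory}" for memory in store.search(question, k))
    return f"Context from the user's own device:\n{context}\n\nQuestion: {question}"

store = LocalVectorStore()
store.add("Parked the car on level 3 of the office garage")
store.add("Dentist appointment moved to Friday at 10am")
store.add("Alice recommended the ramen shop near the station")

print(build_prompt(store, "Where is my car parked?", k=1))
```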

3. “Lean” Large Language Models and the Co-Creation Ecosystem

In this model, the roles of AI companies and large language model providers will shift. They will focus more on:

- Parameter Adjustment and Optimization of Large Language Models: AI companies will be responsible for continuously improving and optimizing general-purpose large language models, giving them stronger understanding, generation, and learning capabilities.

- Generating “Lean LLM” Models: Based on these optimized large language models, AI companies can develop lean LLMs specifically optimized for mobile phones. “Lean” means that these models are streamlined and practical: they run efficiently on a smartphone’s limited computing resources and focus on the specific AI functions that AI glasses require. Because they run locally, lean models let users rely on AI functions with confidence, without sending their private data to the cloud.
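A common way to derive a small model from a large one is knowledge distillation, one of the compression techniques named in section 4-1: a small “student” model is trained to match the softened output distribution of a large “teacher.” The sketch below shows only the soft-target loss term from Hinton et al.’s formulation, on hypothetical logits; it illustrates the technique and is not the process any particular AI company uses.

```python
import math

def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits: list[float], student_logits: list[float],
                      temperature: float = 2.0) -> float:
    """Soft-target term of knowledge distillation: KL(teacher || student) on
    temperature-softened distributions, scaled by T**2 as in Hinton et al. (2015)."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2

# Hypothetical next-token logits over a three-token vocabulary.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]
far_student = [0.5, 1.0, 4.0]
print(distillation_loss(teacher, close_student), distillation_loss(teacher, far_student))
```

In a full training loop this term is usually combined with an ordinary loss on the true labels; minimizing it pulls the student’s predictions toward the teacher’s.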

This model also perfectly aligns with Prahalad’s co-creation philosophy and fosters an open innovation ecosystem:

- Open Language Model Platform: AI companies will provide their optimized language models, and smart glasses companies can freely choose the models they consider best from an open “app platform” or “model marketplace,” then download them to users’ phones to work with the glasses. This openness breaks any single AI company’s monopoly over language models and promotes market competition and innovation.

- Independent Third-Party Evaluation and Benchmarking: This co-creation ecosystem needs an independent, trustworthy company to act as a benchmarker. It can evaluate and compare language models and smart glasses applications on the five BLERP indicators and publish the results for consumers. This helps consumers make more informed choices and pushes AI and AI glasses companies to keep improving product quality and privacy protection.

- Realizing N=1 and R=N: In this environment of co-creation and open innovation, the AI glasses ecosystem will develop in a healthy direction and spread quickly. Users can choose AI glasses hardware, phone-side AI models, and application services that fit their individual needs (N=1). At the same time, companies will form close network alliances (R=N), including OEMs/ODMs (Original Equipment/Design Manufacturers), IHVs (Independent Hardware Vendors), ISVs (Independent Software Vendors), and AI companies, and will share the fruits of market growth collectively rather than letting a single company monopolize it.
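The marketplace, benchmarking, and N=1 ideas fit together in a few lines: an independent evaluator publishes BLERP scores for each model in a shared catalog, and each user (or the glasses maker on the user’s behalf) weights those indicators according to personal priorities. Everything here is hypothetical: the model IDs, vendors, scores, and weights are placeholders, and a weighted average is just one simple way to combine the indicators.

```python
from dataclasses import dataclass

INDICATORS = ("bandwidth", "latency", "economics", "reliability", "privacy")

@dataclass(frozen=True)
class MarketplaceEntry:
    model_id: str             # hypothetical model name
    vendor: str               # publishing AI company (R=N: many vendors, one catalog)
    blerp: dict[str, float]   # 0-10 scores published by an independent benchmarker

def personal_score(entry: MarketplaceEntry, weights: dict[str, float]) -> float:
    """Weighted average of the benchmarker's BLERP scores under one user's priorities (N=1)."""
    total = sum(weights[k] for k in INDICATORS)
    return sum(entry.blerp[k] * weights[k] for k in INDICATORS) / total

def choose_model(catalog: list[MarketplaceEntry], weights: dict[str, float]) -> MarketplaceEntry:
    """Pick the catalog entry with the highest personal score."""
    return max(catalog, key=lambda entry: personal_score(entry, weights))

# Placeholder catalog and scores, for illustration only.
catalog = [
    MarketplaceEntry("lean-a", "vendor-1",
                     {"bandwidth": 9, "latency": 6, "economics": 8, "reliability": 7, "privacy": 9}),
    MarketplaceEntry("lean-b", "vendor-2",
                     {"bandwidth": 7, "latency": 9, "economics": 6, "reliability": 8, "privacy": 6}),
]

privacy_minded = {"bandwidth": 1, "latency": 1, "economics": 1, "reliability": 1, "privacy": 4}
speed_minded = {"bandwidth": 1, "latency": 4, "economics": 1, "reliability": 1, "privacy": 1}

print(choose_model(catalog, privacy_minded).model_id)
print(choose_model(catalog, speed_minded).model_id)
```

The benchmark scores are shared and fixed, yet two users with different priorities end up with different models, which is the N=1 point.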

4. Challenges and Opportunities

With the rapid advancement of generative AI and large language model (LLM) technologies, wearable AI devices are becoming a market focus. AI glasses are regarded as the next key end-user device, able to combine voice, vision, sensing, and real-time interaction naturally to create an AI experience that fits human cognition and daily life more closely. However, AI glasses still face numerous technical, design, and market challenges that must be overcome.

4-1. The Evolution of Lean Language Models (Lean LLM) and Inference Capability

Mobile devices can already run compressed and optimized small language models (such as Qwen1.5-M, Gemma 2 9B, and Phi-3 Mini) that provide offline, real-time voice and text generation. However, implementing more advanced AI inference on end devices like AI glasses, such as multimodal understanding (voice plus vision), long-term memory, real-time interaction, and low-latency, highly reliable responses, will require further technical optimization, especially toward truly streamlined, energy-efficient, and fast-responding Lean LLMs.
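A quick calculation shows why compression matters on a phone. The memory needed just for the weights is roughly parameter count × bits per weight ÷ 8 bytes. The sketch assumes 3.8B parameters for Phi-3 Mini (the published figure) and a nominal 9B for Gemma 2 9B, and it ignores the KV cache, activations, and runtime overhead, so real usage is higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Memory for the weights alone, in GB (1e9 bytes). Ignores the KV cache,
    activations, and runtime overhead, so actual usage is higher."""
    return num_params * bits_per_weight / 8 / 1e9

# Assumed parameter counts: published 3.8B for Phi-3 Mini, nominal 9B for Gemma 2 9B.
models = {"Phi-3 Mini": 3.8e9, "Gemma 2 9B": 9e9}

for name, params in models.items():
    sizes = ", ".join(f"{bits}-bit {weight_memory_gb(params, bits):.1f} GB" for bits in (16, 8, 4))
    print(f"{name}: {sizes}")
```

For Phi-3 Mini this works out to about 7.6 GB of weights at 16 bits versus about 1.9 GB at 4 bits, a fourfold reduction from precision alone.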

The design of Lean LLMs must balance five core indicators at once: Bandwidth, Latency, Economics, Reliability, and Privacy, that is, the BLERP model (Bier, 2021). AI glasses will need to deliver acceptable language understanding and generation with extremely limited computational resources, which poses significant challenges for model compression techniques (such as distillation and quantization), hardware architecture (such as NPUs), and software stacks.
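Quantization, one of the compression techniques named above, stores each weight in fewer bits. Below is a minimal sketch of the simplest variant, symmetric per-tensor int8 quantization with one shared scale, applied to hypothetical weight values. Production toolchains typically use per-channel or group-wise scales and often 4-bit formats, so treat this as an illustration of the idea rather than any specific framework’s scheme.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: each w is approximated by scale * q,
    with q an integer in [-127, 127] and one shared scale for the whole tensor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q: list[int], scale: float) -> list[float]:
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in q]

weights = [0.42, -1.27, 0.003, 0.9, -0.51]  # hypothetical weight values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each restored weight lies within half a quantization step (`scale / 2`) of the original, while storage drops from 32 bits to 8 bits per weight plus one scale per tensor.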

4-2. Power Consumption and Heat Dissipation: Engineering Constraints of Wearable AI Devices

Even if Lean LLMs can be downloaded and executed directly on AI glasses in the future, one of the biggest current challenges remains energy and heat management. Inference of language models, even after extensive compression, still consumes significant computational resources, resulting in elevated temperatures on the chip and device surface. Since AI glasses are worn close to sensitive areas such as the face, eyes, and ears, temperatures exceeding the human tolerance threshold can affect user comfort and health.

In addition, meeting the power demands of computation will inevitably require a larger battery, which raises the overall weight of the device. If AI glasses weigh more than twice as much as regular glasses, wearing stability and long-term comfort will suffer significantly, especially in everyday scenarios such as sports, outdoor activities, and business settings.
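The power, battery, and weight trade-off can be made concrete with back-of-the-envelope arithmetic: average power is a time-weighted mix of idle and inference draw, runtime is stored energy divided by average power, and battery mass grows with capacity. All numbers in the example, including the energy density, are hypothetical placeholders, not measurements of any product.

```python
def average_power_mw(idle_mw: float, inference_mw: float, inference_duty: float) -> float:
    """Time-weighted average of idle and inference power (duty cycle in [0, 1])."""
    return idle_mw * (1 - inference_duty) + inference_mw * inference_duty

def runtime_hours(battery_mwh: float, avg_power_mw: float) -> float:
    """Runtime = stored energy / average power draw."""
    return battery_mwh / avg_power_mw

def battery_mass_g(battery_mwh: float, energy_density_wh_per_kg: float) -> float:
    """Cell mass implied by a capacity and an energy density."""
    return (battery_mwh / 1000) / energy_density_wh_per_kg * 1000

# Hypothetical example values (not measured from any real device).
IDLE_MW = 100.0             # sensors, display, and radio when idle
INFERENCE_MW = 2000.0       # while running a compressed model on the glasses
DUTY = 0.10                 # fraction of time spent running inference
BATTERY_MWH = 600.0         # battery capacity
DENSITY_WH_PER_KG = 200.0   # assumed Li-ion cell energy density

avg = average_power_mw(IDLE_MW, INFERENCE_MW, DUTY)
print(f"average draw: {avg:.0f} mW")
print(f"runtime: {runtime_hours(BATTERY_MWH, avg):.1f} h")
print(f"battery cell mass: {battery_mass_g(BATTERY_MWH, DENSITY_WH_PER_KG):.1f} g")
```

With these placeholder figures, doubling the runtime at the same average draw means doubling the capacity and therefore roughly doubling the cell’s mass. Since nearly all of the electrical power drawn ends up as heat, the same average-power figure also bounds the thermal load near the face.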

4-3. Technical Vision and Opportunities: From Companion Devices to Fully Autonomous Operation

A more feasible approach at present is to use AI glasses as the front-end sensing and interaction interface while delegating AI inference to a paired smartphone or another wearable device (such as earphones). This keeps the glasses lightweight and comfortable while drawing on the smartphone’s computing power for localized inference and edge AI services, for example using RAG (Retrieval-Augmented Generation) to search personal data locally and generate language responses.
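The companion-device split implies a routing decision for every task. Here is a minimal sketch of one possible policy, assuming hypothetical compute thresholds and task costs: tiny tasks (such as wake-word detection) stay on the glasses, inference goes to the paired phone, only tasks without personal data may fall back to the cloud, and sensitive tasks that cannot run locally are deferred rather than uploaded. The `Target`, `Task`, and `route` names are illustrative, not part of any real SDK.

```python
from dataclasses import dataclass
from enum import Enum

class Target(Enum):
    GLASSES = "glasses"  # tiny tasks, e.g. wake-word detection
    PHONE = "phone"      # paired phone: lean LLM plus local RAG
    CLOUD = "cloud"      # remote servers: only for tasks without personal data
    DEFER = "defer"      # keep the data on-device and retry when the phone is reachable

@dataclass(frozen=True)
class Task:
    name: str
    contains_personal_data: bool
    est_gflops: float  # hypothetical compute estimate per request

# Hypothetical capacity limits, for illustration only.
GLASSES_MAX_GFLOPS = 1.0
PHONE_MAX_GFLOPS = 5000.0

def route(task: Task, phone_connected: bool = True) -> Target:
    """Pick where a task runs, never sending personal data to the cloud."""
    if task.est_gflops <= GLASSES_MAX_GFLOPS:
        return Target.GLASSES
    if phone_connected and task.est_gflops <= PHONE_MAX_GFLOPS:
        return Target.PHONE
    if task.contains_personal_data:
        return Target.DEFER
    return Target.CLOUD

print(route(Task("wake word", True, 0.1)))
print(route(Task("what did I see this morning?", True, 200.0)))
print(route(Task("translate a public sign", False, 200.0), phone_connected=False))
```

The privacy rule is checked before the cloud fallback, so a weak connection can delay a sensitive request but never push it off the user’s devices.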

As low-power neural processing units (NPUs), solid-state heat dissipation materials, and new battery technologies gradually mature, the vision of “glasses as agents” is no longer far-fetched. At that point, every pair of AI glasses will be able to run its own dedicated language model, equipped with long-term memory, contextual understanding, and personalized interaction capabilities—truly becoming a daily cognitive assistant for humans.

Conclusion

The future of AI glasses should be one of diversified innovation: an open, collaborative ecosystem driven by personalized consumer needs and co-created by many parties. By shifting sensitive data processing to the smartphone (or, in the future, to AI glasses that run local models directly) and promoting the development of “Lean LLM” models, we can effectively alleviate privacy concerns. At the same time, establishing an open language model platform and independent benchmarking organizations will foster healthy market competition and ultimately realize the **“co-created value”** envisioned by C.K. Prahalad and M.S. Krishnan, making AI glasses a truly trustworthy and widely adopted personal AI assistant for the general public.

References:

- Google, Google I/O Developer Conference (2025)

- Meta, Ray-Ban Meta Smart Glasses product information

- Jeff Bier (Edge AI and Vision Alliance), BLERP framework

- C.K. Prahalad & M.S. Krishnan, “The New Age of Innovation: Driving Co-Created Value Through Global Networks”, McGraw-Hill (2008)

- Literature on applications of RAG (Retrieval-Augmented Generation)

