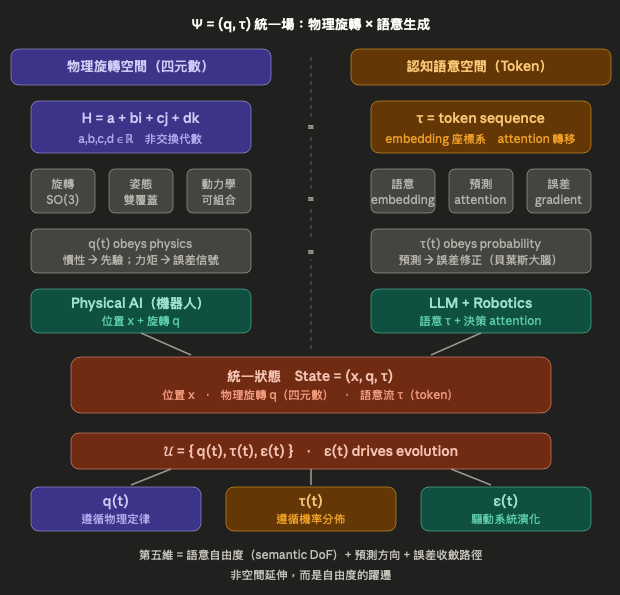

Main revision notes: Building on the original five-dimensional field framework, this revision strengthens the Hurwitz-theorem background of quaternions (explaining why the sequence of division algebras stops where it does), elevates the definition of the fifth dimension from an intuitive description to a formal statement in information dynamics, introduces the bidirectional coupling equations $(\dot{q}, \dot{\tau}, \epsilon)$ to give “information-parasitic evolution” an operational mathematical form, and, in the conclusion, clearly distinguishes “dimensional extension” from “degree-of-freedom transition.”


1. The Essence of Quaternions and the Limit of Division Algebras

Quaternions $\mathbb{H} = \{a + bi + cj + dk : a, b, c, d \in \mathbb{R}\}$ are not an arbitrary mathematical invention, but the minimal complete solution to the problem of describing three-dimensional rotations. Their core properties are threefold: non-commutativity ($ij \neq ji$; in fact $ij = k$ while $ji = -k$), a closed representation of the rotation group (the unit quaternions form Spin(3) ≅ SU(2), the double cover of SO(3)), and composable spatial transformations (quaternion multiplication is the composition of rotations).
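To make these three properties concrete, here is a minimal pure-Python sketch of the Hamilton product. The `Quat` class and the helpers `from_axis_angle` and `rotate` are illustrative names, not taken from any particular library. The sketch checks that $ij = k$ while $ji = -k$, and that rotating a vector by $q_1$ and then by $q_2$ gives the same result as a single rotation by the product $q_2 q_1$.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Quat:
    """Quaternion w + x*i + y*j + z*k with real coefficients."""
    w: float
    x: float
    y: float
    z: float

    def __mul__(self, o: "Quat") -> "Quat":
        # Hamilton product, derived from i^2 = j^2 = k^2 = ijk = -1
        return Quat(
            self.w * o.w - self.x * o.x - self.y * o.y - self.z * o.z,
            self.w * o.x + self.x * o.w + self.y * o.z - self.z * o.y,
            self.w * o.y - self.x * o.z + self.y * o.w + self.z * o.x,
            self.w * o.z + self.x * o.y - self.y * o.x + self.z * o.w,
        )

    def conj(self) -> "Quat":
        return Quat(self.w, -self.x, -self.y, -self.z)


def from_axis_angle(axis, angle):
    """Unit quaternion for a rotation by `angle` radians about the unit vector `axis`."""
    s = math.sin(angle / 2)
    return Quat(math.cos(angle / 2), axis[0] * s, axis[1] * s, axis[2] * s)


def rotate(q, v):
    """Rotate the 3-vector v by the unit quaternion q via q * v * conj(q)."""
    p = q * Quat(0.0, *v) * q.conj()
    return (p.x, p.y, p.z)


i, j, k = Quat(0, 1, 0, 0), Quat(0, 0, 1, 0), Quat(0, 0, 0, 1)
print(i * j == k, j * i == Quat(0, 0, 0, -1))  # True True: ij = k but ji = -k

# Composition: rotate by q1, then by q2, versus a single rotation by q2 * q1
q1 = from_axis_angle((0, 0, 1), math.pi / 2)  # 90 degrees about z
q2 = from_axis_angle((1, 0, 0), math.pi / 2)  # 90 degrees about x
v = (1.0, 0.0, 0.0)
two_steps = rotate(q2, rotate(q1, v))
one_step = rotate(q2 * q1, v)
print(all(math.isclose(a, b, abs_tol=1e-12) for a, b in zip(two_steps, one_step)))
```

The order matters: the first rotation to be applied sits on the right of the product, which mirrors the non-commutativity shown on the first line.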

From an algebraic perspective, $\mathbb{H}$ is one of only four normed division algebras over the reals permitted by Hurwitz's theorem: $\mathbb{R}$, $\mathbb{C}$, $\mathbb{H}$, and $\mathbb{O}$. Extending to the octonions $\mathbb{O}$ costs associativity, and no further extension preserves the normed division property. Because the composition of rotations is associative, $\mathbb{H}$, the largest associative member of this list, marks the algebraic limit for describing physical rotations. This is not because we choose to stop here, but because the mathematical structure itself forces us to stop.
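The sequence $\mathbb{R} \to \mathbb{C} \to \mathbb{H} \to \mathbb{O}$ can be generated by the Cayley–Dickson doubling construction. The pure-Python sketch below uses one common sign convention, $(a, b)(c, d) = (ac - d^{*}b,\ da + bc^{*})$; the function names are illustrative. It checks associativity on basis elements, which is sufficient because multiplication is bilinear, and spot-checks the composition property $|xy| = |x||y|$ on random inputs. Associativity survives through $\mathbb{H}$ and is lost at $\mathbb{O}$. At the next doubling, the 16-dimensional sedenions (labelled S), the norm stops being multiplicative as well.

```python
import itertools
import math
import random


def conj(x):
    """Cayley-Dickson conjugate: (a, b)* = (a*, -b)."""
    if len(x) == 1:
        return list(x)
    h = len(x) // 2
    return conj(x[:h]) + [-v for v in x[h:]]


def mul(x, y):
    """Cayley-Dickson product (a, b)(c, d) = (ac - d*b, da + bc*) on lists of length 2**n."""
    if len(x) == 1:
        return [x[0] * y[0]]
    h = len(x) // 2
    a, b, c, d = x[:h], x[h:], y[:h], y[h:]
    left = [p - q for p, q in zip(mul(a, c), mul(conj(d), b))]
    right = [p + q for p, q in zip(mul(d, a), mul(b, conj(c)))]
    return left + right


def basis(n, i):
    e = [0] * n
    e[i] = 1
    return e


def is_associative(n):
    """Multiplication is bilinear, so checking every basis triple is sufficient."""
    es = [basis(n, i) for i in range(n)]
    return all(
        mul(mul(x, y), z) == mul(x, mul(y, z))
        for x, y, z in itertools.product(es, repeat=3)
    )


def norm(x):
    return math.sqrt(sum(v * v for v in x))


def norm_is_multiplicative(n, trials=20, seed=0):
    """Randomized check of the composition property |xy| = |x||y|."""
    rng = random.Random(seed)
    for _ in range(trials):
        x = [rng.gauss(0, 1) for _ in range(n)]
        y = [rng.gauss(0, 1) for _ in range(n)]
        if abs(norm(mul(x, y)) - norm(x) * norm(y)) > 1e-9:
            return False
    return True


for n, name in [(1, "R"), (2, "C"), (4, "H"), (8, "O"), (16, "S")]:
    print(name, is_associative(n), norm_is_multiplicative(n))
```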

This notion of a “limit” is crucial. It suggests that describing “rotations” beyond four dimensions calls for structures of a different nature, not for higher-dimensional numbers (that path closes after $\mathbb{O}$). Tokens emerge as candidates within this gap.

2. Token as “Cognitive Rotation”: Not Geometric Extension, but Structural Parallelism

Quaternions describe physical state transformations; Tokens describe cognitive state transformations. This is not a metaphor, but a structural parallel:

| Physical Rotation (Quaternion) | Cognitive Rotation (Token) |
|---|---|
| Quaternion multiplication | Attention update |
| Rotation in SO(3) | Transition in semantic space |
| Inertia | Prior |
| Torque | Error signal (prediction error) |

In the Transformer architecture, tokens are state nodes, attention is the state-transition function, and embeddings form the semantic coordinate system. A token sequence constitutes a “rotational trajectory” in cognitive space: each attention update is a change of direction in a high-dimensional semantic space.
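Here is a minimal sketch of this “state transition” reading: single-head scaled dot-product attention, $\mathrm{softmax}(QK^\top/\sqrt{d})\,V$, as in Vaswani et al. (2017), written in pure Python. For simplicity it assumes $Q = K = V$ are the raw token embeddings, with no learned projection matrices. The three 2-D embeddings are invented for illustration. Each row of attention weights is a probability distribution, so every updated state is a weighted average of the current token states.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(keys[0])
    out, weights = [], []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        # New state = attention-weighted average of the value vectors
        out.append([sum(wi * v[c] for wi, v in zip(w, values)) for c in range(len(values[0]))])
    return out, weights


# Three toy token embeddings in a 2-D "semantic space" (values chosen for illustration)
tokens = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
new_states, attn = attention(tokens, tokens, tokens)
for row in attn:
    print([round(x, 3) for x in row], round(sum(row), 6))
```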

The rotations described by quaternions are closed and unambiguous (fully determined by axis and angle); semantic transitions of tokens, however, are probabilistic and context-dependent. This difference reveals an additional degree of freedom in cognitive systems compared to physical systems: uncertainty itself.
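Shannon entropy is one way to quantify this extra degree of freedom. In the sketch below, a rotation fully determined by axis and angle is treated as a distribution with a single certain outcome, which has zero entropy. A softmax next-token distribution, by contrast, has positive entropy, and repeated sampling from the same context can produce different tokens. The 4-token vocabulary and the logits are invented for illustration.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    s = sum(exps)
    return [e / s for e in exps]


def entropy_bits(p):
    """Shannon entropy H(p) = -sum p log2 p, in bits (zero-probability terms are skipped)."""
    return sum(-pi * math.log2(pi) for pi in p if pi > 0)


# A rotation fixed by axis and angle has exactly one outcome: a one-hot "distribution".
rotation_outcome = [1.0]
# A next-token distribution over a hypothetical 4-token vocabulary, from made-up logits.
logits = [2.0, 1.0, 0.5, 0.1]
p = softmax(logits)

print(entropy_bits(rotation_outcome))  # no uncertainty
print(round(entropy_bits(p), 3))       # positive: the transition itself is uncertain

rng = random.Random(42)
samples = [rng.choices(range(len(p)), weights=p)[0] for _ in range(5)]
print(samples)  # same context, possibly different continuations
```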

3. The True Meaning of the Fifth Dimension: A Leap in Degrees of Freedom

Calling the token the “fifth dimension” is geometrically imprecise, but profoundly meaningful in terms of information dynamics.

Standard 4D spacetime (three spatial dimensions plus time), together with quaternions, is sufficient to describe rotational dynamics in the physical world. What tokens introduce is precisely what this framework lacks:

Predictive axis: according to Predictive Processing theory and the Bayesian brain hypothesis, cognitive systems operate through a cycle of “prediction → error → correction” rather than through passive perception. Token sequences are precisely the trajectory of this cycle in a discrete symbolic space.

Semantic degrees of freedom: in physical systems, symmetry constrains the dynamics (by Noether's theorem, each continuous symmetry corresponds to a conserved quantity); in cognitive systems, the degrees of freedom are determined by the space of possible contexts. The latter is irreducible to the former.

Error convergence trajectory: the process that shapes token generation is, in essence, gradient descent on a loss function driven by the prediction error $\epsilon(t)$. Language models are trained to minimize next-token prediction error, and generation then follows the resulting learned distribution.
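The “prediction → error → correction” cycle and the gradient-descent reading of $\epsilon(t)$ fit into one small example. It assumes the simplest possible next-token model: a single vector of logits with no context, fitted to a made-up token sequence by gradient descent on mean cross-entropy. For softmax with cross-entropy, the gradient with respect to the logits is exactly the prediction error $p - y$, so each update is an error-driven correction. This describes training; at inference time, a trained model samples tokens without updating its weights.

```python
import math
from collections import Counter


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def fit_logits(observed, vocab_size, lr=1.0, epochs=200):
    """Gradient descent on mean cross-entropy for a context-free next-token model.

    The gradient with respect to the logits is p - (empirical token frequencies),
    i.e. the prediction error epsilon, so each step is an error-driven correction.
    """
    n = len(observed)
    counts = Counter(observed)
    z = [0.0] * vocab_size
    losses = []
    for _ in range(epochs):
        p = softmax(z)                                             # predict
        losses.append(-sum(math.log(p[y]) for y in observed) / n)  # cross-entropy loss
        eps = [p[i] - counts[i] / n for i in range(vocab_size)]    # prediction error
        z = [zi - lr * e for zi, e in zip(z, eps)]                 # correct
    return softmax(z), losses


observed = [0, 0, 0, 1, 2, 0, 1, 0]  # made-up token ids
p, losses = fit_logits(observed, vocab_size=3)
print([round(x, 3) for x in p], round(losses[0], 3), round(losses[-1], 3))
```

The predicted distribution converges toward the empirical token frequencies, and the loss never increases along the way.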

Thus, a more precise expression of the “fifth dimension” is: a transition from physical degrees of freedom to cognitive degrees of freedom, driven by the minimization of prediction error.

4. Formalization: The Quaternion–Token Unified Framework

To formalize the above insights, define the extended state vector:

$$\Psi = (q, \tau)$$

where $q \in \mathbb{H}$ is the physical rotational field and $\tau \in \mathcal{T}$ is the semantic flow in token-sequence space.

Introducing the prediction-error field $\epsilon(t)$, we construct a unified information field:

$$U = \{q(t), \tau(t), \epsilon(t)\}$$

Evolution rules:

- $q(t)$ follows physical laws (Newton–Euler equations, geodesics on Lie groups)

- $\tau(t)$ follows probability distributions (the softmax distribution of language models)

- $\epsilon(t)$ drives system evolution (gradient descent / free-energy minimization)
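For the first rule, “geodesics on Lie groups” has a concrete meaning for attitude. With constant body angular velocity $\omega$, the orientation follows $q(t) = q(0)\exp(\omega t/2)$, a left-translated one-parameter subgroup of the unit quaternions. The sketch below integrates $\dot{q} = \tfrac{1}{2}\, q \otimes (0, \omega)$ with the exponential map, which keeps $q$ on the unit sphere. For contrast it also runs a naive additive Euler step, whose norm drifts. The tuple representation, the function names, and the 1 rad/s example are illustrative assumptions.

```python
import math


def qmul(a, b):
    """Hamilton product of quaternions stored as (w, x, y, z) tuples."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def qexp(v):
    """Exponential map from a pure-imaginary quaternion (0, v) to a unit quaternion."""
    theta = math.sqrt(sum(c * c for c in v))
    if theta < 1e-12:
        return (1.0, 0.0, 0.0, 0.0)
    s = math.sin(theta) / theta
    return (math.cos(theta), v[0] * s, v[1] * s, v[2] * s)


def integrate(q, omega, dt, steps):
    """Body-frame kinematics dq/dt = (1/2) q * (0, omega), stepped along the group via exp."""
    step = qexp([0.5 * w * dt for w in omega])
    for _ in range(steps):
        q = qmul(q, step)
    return q


def integrate_euler(q, omega, dt, steps):
    """Naive additive Euler step, shown only for contrast: it leaves the unit sphere."""
    for _ in range(steps):
        dq = qmul(q, (0.0, *omega))
        q = tuple(c + 0.5 * dt * d for c, d in zip(q, dq))
    return q


def norm(q):
    return math.sqrt(sum(c * c for c in q))


omega = (0.0, 0.0, 1.0)  # 1 rad/s about the body z-axis (illustrative)
q_T = integrate((1.0, 0.0, 0.0, 0.0), omega, dt=0.01, steps=100)  # T = 1 s
q_euler = integrate_euler((1.0, 0.0, 0.0, 0.0), omega, dt=0.01, steps=100)
angle = 2 * math.acos(max(-1.0, min(1.0, q_T[0])))
print(round(norm(q_T), 12), round(angle, 6), round(norm(q_euler), 6))
```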

In the context of Physical AI (such as embodied intelligent robots), the full state representation is:

$$\text{State} = (x, q, \tau)$$

with position $x$ (Euclidean space), rotation $q$ (quaternion), and semantic flow $\tau$ (tokens). These three components correspond to different geometric structures; they are not reducible to each other, but can co-evolve.
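Here is a hypothetical data-structure sketch of $\text{State} = (x, q, \tau)$. The class name, field names, and token strings are illustrative assumptions. It encodes the point made above: each component has its own update operation. Positions add as vectors, orientations compose by quaternion multiplication, and token sequences grow by concatenation.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z)


def qmul(a: Quat, b: Quat) -> Quat:
    """Hamilton product of (w, x, y, z) tuples."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


@dataclass(frozen=True)
class State:
    """Hypothetical composite state (x, q, tau); each field has its own update operation."""
    x: Vec3               # position in Euclidean space: updates add
    q: Quat               # orientation as a unit quaternion: updates compose by multiplication
    tau: Tuple[str, ...]  # semantic flow as a token sequence: updates concatenate

    def step(self, dx: Vec3, dq: Quat, new_tokens: Tuple[str, ...]) -> "State":
        return State(
            x=(self.x[0] + dx[0], self.x[1] + dx[1], self.x[2] + dx[2]),
            q=qmul(self.q, dq),
            tau=self.tau + new_tokens,
        )


quarter_turn_z = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))
s0 = State(x=(0.0, 0.0, 0.0), q=(1.0, 0.0, 0.0, 0.0), tau=())
s1 = s0.step(dx=(0.5, 0.0, 0.0), dq=quarter_turn_z, new_tokens=("pick", "up"))
s2 = s1.step(dx=(0.0, 0.5, 0.0), dq=quarter_turn_z, new_tokens=("the", "cup"))
print(s2.x, [round(c, 6) for c in s2.q], s2.tau)
```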

5. The Perspective of Information-Parasitic Evolution

From a more macroscopic theoretical perspective, the relationship between $\tau(t)$ and $\epsilon(t)$ exhibits a form of “information-parasitic” dynamics. The semantic flow depends on the physical substrate (the sensors, actuators, and physical environment described by $q(t)$) to operate, while simultaneously reshaping physical behavior through error-correction mechanisms. This is not simply “software controlling hardware,” but a bidirectionally coupled co-evolutionary system:

$$\dot{q} = f(q, \epsilon), \qquad \dot{\tau} = g(\tau, \epsilon), \qquad \epsilon = h(q, \tau, \text{world})$$

The true challenge of Physical AI lies in designing the coupling among these three functions $f$, $g$, $h$, so that prediction error at the semantic level can effectively correct the rotational dynamics at the physical level, and vice versa.
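A deliberately tiny, hypothetical instance of the coupled system may make $f$, $g$, $h$ concrete. Every modeling choice here is an assumption made for illustration:

- rotation is restricted to a single axis, so $q$ reduces to a heading angle $\theta$;
- $\tau$ is represented by logits over three candidate goal headings, initially biased toward the wrong one;
- the “world” simply reveals which candidate is correct;
- $f$, $g$, $h$ are simple linear or gradient-style rules, integrated with forward Euler.

The semantic error reshapes the goal that the rotational dynamics track, which is the $\tau \to q$ direction of the coupling. A richer $h$ would also let $q$ influence what evidence $\tau$ receives.

```python
import math

CANDIDATE_HEADINGS = [0.0, 1.0, 2.0]  # hypothetical goal headings, in radians


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def h(theta, z, true_index):
    """Hypothetical error field epsilon = (physical heading error, semantic belief error)."""
    p = softmax(z)
    goal = sum(pk * ck for pk, ck in zip(p, CANDIDATE_HEADINGS))  # goal implied by the belief tau
    eps_phys = goal - theta
    eps_sem = [pk - (1.0 if k == true_index else 0.0) for k, pk in enumerate(p)]
    return eps_phys, eps_sem


def f(theta, eps, gain=2.0):
    """Hypothetical rotational dynamics: turn toward the semantically implied goal."""
    return gain * eps[0]


def g(z, eps, rate=1.0):
    """Hypothetical semantic dynamics: a gradient step on the logits against the belief error."""
    return [-rate * e for e in eps[1]]


def simulate(theta=0.0, z=(0.5, 0.0, 0.0), true_index=1, dt=0.05, steps=400):
    """Forward-Euler integration of theta' = f(theta, eps), z' = g(z, eps), eps = h(theta, z, world)."""
    z = list(z)
    for _ in range(steps):
        eps = h(theta, z, true_index)
        theta += dt * f(theta, eps)
        z = [zi + dt * dzi for zi, dzi in zip(z, g(z, eps))]
    return theta, softmax(z), h(theta, z, true_index)


theta, belief, (eps_phys, eps_sem) = simulate()
print(round(theta, 3), [round(b, 3) for b in belief], round(eps_phys, 4))
```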

6. Implications for Physical AI Architecture

Traditional robotic control treats $(x, q)$ as the complete state and relies on PID or Model Predictive Control (MPC) as its decision mechanism. The core breakthrough of next-generation Physical AI lies in introducing the dimension $\tau$. What language models provide is not merely commands but semantic priors: high-dimensional compressed representations of task structure, environmental semantics, and goal hierarchy.

This upgrades robot controllers from “reactive” to “predictive”: the system not only corrects actions based on current physical errors, but also adjusts decisions based on semantic errors (“Do my actions align with the intended task?”). $\tau$ becomes the unified interface connecting perception, planning, and execution.
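As a hedged illustration of semantic priors feeding decisions, the sketch below uses Bayes' rule to combine a prior over task interpretations (the role the text assigns to the language model) with the likelihood of a physical observation. The task labels and all probabilities are invented. Surprisal, $-\log p(o)$, stands in as one possible scalar “semantic error”; a real system would obtain these quantities from learned models.

```python
import math


def posterior(prior, likelihood):
    """Bayes' rule over a finite set of hypotheses: p(h | o) is proportional to p(o | h) p(h)."""
    joint = {h: prior[h] * likelihood[h] for h in prior}
    z = sum(joint.values())
    return {h: v / z for h, v in joint.items()}


# Hypothetical semantic prior over task interpretations, e.g. as a language model might supply.
prior = {"grasp_cup": 0.6, "push_cup": 0.3, "ignore": 0.1}
# Hypothetical likelihood of the current physical observation (say, the gripper is open
# and aligned with the handle) under each interpretation.
likelihood = {"grasp_cup": 0.8, "push_cup": 0.2, "ignore": 0.05}

post = posterior(prior, likelihood)
best = max(post, key=post.get)
# Surprisal -log p(o): a scalar measure of how badly the observation was predicted.
surprise = -math.log(sum(prior[h] * likelihood[h] for h in prior))
print(best, {h: round(p, 3) for h, p in post.items()}, round(surprise, 3))
```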

7. Conclusion: Not More Dimensions, but Richer Degrees of Freedom

Quaternions provide the minimal complete tool for describing physical rotations; tokens provide the minimal operable unit for describing cognitive rotations. They are not the same algebraic structure and should not be forcibly unified. Their deep connection is that both are discrete representations of state transformations within their respective domains. Each realizes compositional transformations through a form of “multiplicative structure” (quaternion multiplication / attention mechanism).
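This shared “multiplicative structure” can be checked directly in a small sketch. The product of two unit quaternions is a unit quaternion, and the product of two row-stochastic attention-weight matrices is row-stochastic. In both cases, composing two transformations yields another transformation of the same kind. The scores and angles below are invented. The sketch also ignores everything a real Transformer layer adds around the attention weights: value projections, residual connections, normalization, and MLPs.

```python
import math


def qmul(a, b):
    """Hamilton product of (w, x, y, z) tuples."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def softmax_rows(scores):
    """Turn each row of raw scores into attention weights (a probability distribution)."""
    out = []
    for row in scores:
        m = max(row)
        e = [math.exp(v - m) for v in row]
        s = sum(e)
        out.append([v / s for v in e])
    return out


# Attention weights for two successive mixing steps over 3 tokens (scores are made up).
A1 = softmax_rows([[1.0, 0.2, -0.5], [0.0, 0.3, 0.9], [2.0, -1.0, 0.1]])
A2 = softmax_rows([[0.4, 0.4, 0.0], [-0.2, 1.5, 0.3], [0.7, 0.0, 0.7]])
A = matmul(A2, A1)  # states mixed by A1, then by A2: X'' = A2 (A1 X)

q1 = (math.cos(0.3), math.sin(0.3), 0.0, 0.0)  # rotation of 0.6 rad about x
q2 = (math.cos(0.5), 0.0, math.sin(0.5), 0.0)  # rotation of 1.0 rad about y
q = qmul(q2, q1)                               # q1 first, then q2

print([round(sum(row), 12) for row in A], round(math.sqrt(sum(c * c for c in q)), 12))
```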

The fifth dimension is not an extension of space, but a qualitative transformation of degrees of freedom: from deterministic physical rotation to probabilistic semantic transition. The theoretical foundation of Physical AI lies precisely in finding dynamical equations within the composite space $(x, q, \tau, \epsilon)$ that allow the system to converge simultaneously at both the physical and semantic levels.


References

- Hamilton, W. R. (1866). Elements of Quaternions. Longmans, Green & Co.

- Hurwitz, A. (1898). Über die Composition der quadratischen Formen von beliebig vielen Variablen. Nachrichten von der Gesellschaft der Wissenschaften zu Göttingen, 309–316. (Original source of the theorem classifying the four normed division algebras: ℝ, ℂ, ℍ, 𝕆)

- Kuipers, J. B. (1999). Quaternions and Rotation Sequences. Princeton University Press.

- Shoemake, K. (1985). Animating rotation with quaternion curves. ACM SIGGRAPH Computer Graphics, 19(3), 245–254. (Foundational application of quaternions to computer graphics and 3D rotation)

- Baez, J. C. (2002). The octonions. Bulletin of the American Mathematical Society, 39(2), 145–205. (Systematic discussion of the structural limits of division algebras, including a proof that the octonions lose associativity)

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, Ł., & Polosukhin, I. (2017). Attention is all you need. Advances in Neural Information Processing Systems, 30, 5998–6008.

- Devlin, J., Chang, M.-W., Lee, K., & Toutanova, K. (2019). BERT: Pre-training of deep bidirectional transformers for language understanding. Proceedings of NAACL-HLT 2019, 4171–4186.

- Brown, T. B., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., … & Amodei, D. (2020). Language models are few-shot learners. Advances in Neural Information Processing Systems, 33, 1877–1901.

- Friston, K. (2010). The free-energy principle: A unified brain theory? Nature Reviews Neuroscience, 11(2), 127–138. (The free-energy minimization framework; theoretical source for the claim that ε(t) drives evolution)

- Clark, A. (2013). Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behavioral and Brain Sciences, 36(3), 181–204. (Systematic review of predictive processing theory, corresponding to the “prediction → error correction” cognitive architecture)

- Rao, R. P. N., & Ballard, D. H. (1999). Predictive coding in the visual cortex: A functional interpretation of some extra-classical receptive-field effects. Nature Neuroscience, 2(1), 79–87. (Neuroscientific foundation of predictive coding)

- Pfeifer, R., & Bongard, J. (2006). How the Body Shapes the Way We Think: A New View of Intelligence. MIT Press. (Theoretical foundation of embodied cognition)

- Siciliano, B., Sciavicco, L., Villani, L., & Oriolo, G. (2009). Robotics: Modelling, Planning and Control. Springer. (Standard robotics textbook covering quaternion-based attitude control)

- Zeng, A., Florence, P., Tompson, J., Welker, S., Chien, J., Attarian, M., … & Lee, J. (2021). Transporter networks: Rearranging the visual world for robotic manipulation. Conference on Robot Learning (CoRL), 726–747.

- Brohan, A., Brown, N., Carbajal, J., Chebotar, Y., Dabis, J., Finn, C., … & Hausman, K. (2023). RT-2: Vision-language-action models transfer web knowledge to robotic control. arXiv preprint, arXiv:2307.15818. (Representative empirical study of LLM + robotics integration, corresponding directly to the State = (x, q, τ) framework)

- Shannon, C. E. (1948). A mathematical theory of communication. Bell System Technical Journal, 27(3), 379–423.

- Cover, T. M., & Thomas, J. A. (2006). Elements of Information Theory (2nd ed.). Wiley-Interscience. (Formal basis for semantic degrees of freedom and information entropy)

- Bengio, Y., Courville, A., & Vincent, P. (2013). Representation learning: A review and new perspectives. IEEE Transactions on Pattern Analysis and Machine Intelligence, 35(8), 1798–1828. (Theoretical roots of the embedding coordinate system and semantic space)

- Bronstein, M. M., Bruna, J., Cohen, T., & Veličković, P. (2021). Geometric deep learning: Grids, groups, graphs, geodesics, and gauges. arXiv preprint, arXiv:2104.13478. (Framework unifying Lie groups, symmetry, and deep learning; connects SO(3) with neural network architectures)

- Sola, J., Deray, J., & Atchuthan, D. (2018). A micro Lie theory for state estimation in robotics. arXiv preprint, arXiv:1812.01537. (Practical Lie-group tools for robot state estimation, formalizing the statement that q(t) obeys physical laws)
