Open vs. Closed: From the Generative AI Debate to the Future Challenges of Heterogeneous Chip Communication

At a high-profile panel at Computex 2025 in Taipei, the open-versus-closed AI debate surfaced as a pointed question: "Who will succeed in the next five years, OpenAI or DeepSeek?" The answer noted that OpenAI runs a closed system with more efficient internal integration, while DeepSeek adopts an open strategy; its innovation is impressive, but it faces challenges in overall coordination and in establishing discipline.

My reading is that offering open APIs for external use demands significantly more integration and testing resources.

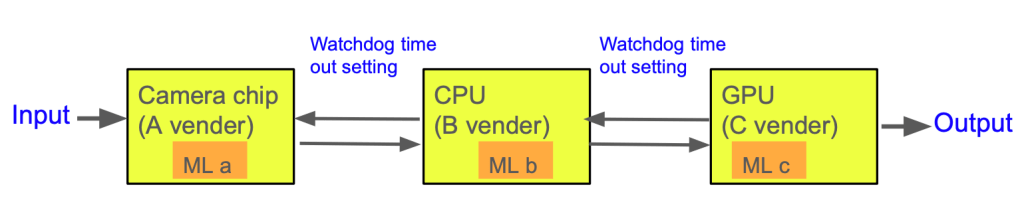

This conversation reminded me of a discussion I had years ago with my boss about the future challenges of AI laptops. At the time, I shared a perspective: if future chips each carry their own AI or machine learning module, the differences in what each one optimizes for will make communication in multi-chip systems more complex than ever before.

Challenges of Heterogeneous Systems: When Every Chip Has Learning Capabilities

In traditional computer architectures, chips (CPUs, GPUs, PCHs, camera and audio controllers, and so on) communicate primarily through standardized hardware protocols and firmware-level command abstractions. But as we move into an era where every chip has self-learning capabilities, such as SoCs with built-in NPUs (Neural Processing Units) or tinyML modules, each chip may respond to the same system-level demand (performance optimization, energy efficiency, thermal control) with a different adjustment strategy, shaped by its own training data and model preferences.

What could this lead to?

In a closed system, such as Apple's or NVIDIA's integrated platforms, this machine learning logic can be accounted for at the system design stage. With consistent training data and coordinated system-wide algorithms, these platforms can achieve tight integration. In contrast, open systems such as Android or Linux-based devices, or even x86 and Windows on Arm (WoA) Windows devices, combine chips from different vendors whose learning modules differ significantly in design. The difficulty of integration is then not just a driver issue but a logic conflict problem. If the AI models cannot cooperate, the result can be performance degradation or erroneous decisions.
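To make the logic conflict concrete, here is a toy simulation. It is a sketch under stated assumptions, not a model of any real platform: the two policies, their thresholds, the wattages, and the linear thermal model are all hypothetical.

```python
# Toy illustration of two independently tuned policies fighting over one
# thermal envelope. Every constant below is a made-up value.

AMBIENT_C = 30.0             # temperature at zero power draw
CPU_THROTTLE_ABOVE_C = 80.0  # hypothetical CPU policy: throttle when hotter
GPU_BOOST_BELOW_C = 90.0     # hypothetical GPU policy: boost when cooler
STEP_W = 5.0                 # power change per control step
CPU_MIN_W = 5.0
GPU_MAX_W = 50.0


def system_temp(cpu_w: float, gpu_w: float) -> float:
    """Toy thermal model: one degree Celsius per watt of total power."""
    return AMBIENT_C + cpu_w + gpu_w


def run_uncoordinated(steps: int = 6) -> tuple[float, float, float]:
    """Each chip reacts to the shared temperature with its own rule."""
    cpu_w, gpu_w = 30.0, 25.0
    for _ in range(steps):
        temp = system_temp(cpu_w, gpu_w)  # both chips see the same reading
        if temp > CPU_THROTTLE_ABOVE_C:
            cpu_w = max(CPU_MIN_W, cpu_w - STEP_W)
        if temp < GPU_BOOST_BELOW_C:
            gpu_w = min(GPU_MAX_W, gpu_w + STEP_W)
    return cpu_w, gpu_w, system_temp(cpu_w, gpu_w)


def run_coordinated(target_c: float = 80.0) -> tuple[float, float, float]:
    """A system-wide arbiter scales both chips to fit one power budget."""
    cpu_w, gpu_w = 30.0, 25.0
    budget_w = target_c - AMBIENT_C
    scale = min(1.0, budget_w / (cpu_w + gpu_w))
    cpu_w, gpu_w = cpu_w * scale, gpu_w * scale
    return cpu_w, gpu_w, system_temp(cpu_w, gpu_w)


if __name__ == "__main__":
    print("uncoordinated:", run_uncoordinated())
    print("coordinated:  ", run_coordinated())
```

In the uncoordinated run the CPU gives up power every step while the GPU claims it, so the temperature never drops and the CPU ends up pinned at its floor. The coordinated run reaches the thermal target while both chips keep a proportional share.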

The Value of Open Systems: Innovation Comes from Diversity, But Requires High Discipline

Of course, the value of open systems cannot be dismissed. On open platforms, innovation often flourishes more easily. The collaborative mechanisms among diverse vendors and open-source communities enable rapid iteration of new technologies, such as the evolution of RISC-V or OpenCL platforms. But such openness relies on one key condition: modular definitions must be clear, and abstract interface layers must be highly disciplined.

This means we need to do a few key things before designing open systems:

- Design clear abstraction layers: Ensure that each chip’s AI model and internal logic can communicate through consistent interfaces (e.g., APIs or Device Trees).

- Establish communication protocols for heterogeneous systems: Arm has begun promoting mechanisms like SMC (Secure Monitor Call) and FF-A (Firmware Framework for Arm A-profile), which point to key directions for future development.

- Visualization and logical interfacing standards at the AI model level: We may need a new “AI-to-AI communication protocol,” similar in importance to how TCP/IP functions in networking.
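As a rough illustration of what a disciplined interface between chip-level models could look like, the sketch below defines a versioned message that one chip's model might send to a coordinator. Everything here is an assumption made for illustration: the `TuningProposal` name, its fields, and the JSON encoding do not come from any existing standard.

```python
import json
from dataclasses import asdict, dataclass

SCHEMA_VERSION = 1  # hypothetical version number of this message format


@dataclass(frozen=True)
class TuningProposal:
    """One chip's request to change a shared resource (hypothetical schema)."""
    schema_version: int
    source: str        # which chip or model sent the proposal, e.g. "npu0"
    resource: str      # shared resource being negotiated, e.g. "power_w"
    requested: float   # value the sender would like
    confidence: float  # sender's self-reported confidence, 0.0 to 1.0
    reason: str        # human-readable explanation for debugging


def encode(proposal: TuningProposal) -> bytes:
    """Serialize with sorted keys so identical proposals encode identically."""
    return json.dumps(asdict(proposal), sort_keys=True).encode("utf-8")


def decode(raw: bytes) -> TuningProposal:
    """Parse and validate a proposal, rejecting unknown schema versions."""
    fields = json.loads(raw.decode("utf-8"))
    if fields.get("schema_version") != SCHEMA_VERSION:
        raise ValueError(f"unsupported schema version: {fields.get('schema_version')}")
    proposal = TuningProposal(**fields)
    if not 0.0 <= proposal.confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    return proposal


if __name__ == "__main__":
    sent = TuningProposal(SCHEMA_VERSION, "npu0", "power_w", 12.5, 0.8, "inference burst")
    print(decode(encode(sent)))
```

The explicit version check is the important part: a receiver that refuses messages it does not understand fails loudly at the interface instead of silently misinterpreting another vendor's model.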

Inspiration from Language Models: A Direction for Chip Coordination Tools

Interestingly, within the large language model (LLM) research community, work on making a model's internal reasoning paths more transparent is maturing. One significant tool is the "Logit Lens", an interpretability technique that decodes a model's intermediate-layer activations into output-token predictions, helping researchers see how a decision takes shape inside the model.
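For readers who have not seen the technique, here is a minimal pure-Python sketch of the idea. The three-word vocabulary, the unembedding matrix, and the per-layer hidden states are made-up values; a real logit lens reads them from a trained transformer and usually applies the model's final layer normalization before projecting, which this sketch omits.

```python
import math

# Hypothetical toy model: 3 output tokens, hidden size 2, 3 layers.
VOCAB = ["cool", "hold", "boost"]
UNEMBED = [           # one row per token: maps a hidden state to a logit
    [1.0, 0.0],       # "cool"
    [0.0, 1.0],       # "hold"
    [-1.0, 0.0],      # "boost"
]
HIDDEN_BY_LAYER = [   # hidden state at the last position after each layer
    [0.1, 0.5],
    [0.8, 0.6],
    [2.0, 0.2],
]


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def logit_lens(hidden_by_layer: list[list[float]]) -> list[tuple[int, str, float]]:
    """Project every layer's hidden state through the unembedding matrix
    and report the token the model would predict if it stopped there."""
    readout = []
    for layer, hidden in enumerate(hidden_by_layer):
        logits = [sum(w * h for w, h in zip(row, hidden)) for row in UNEMBED]
        probs = softmax(logits)
        best = max(range(len(VOCAB)), key=probs.__getitem__)
        readout.append((layer, VOCAB[best], probs[best]))
    return readout


if __name__ == "__main__":
    for layer, token, prob in logit_lens(HIDDEN_BY_LAYER):
        print(f"layer {layer}: top token = {token!r} (p = {prob:.2f})")
```

With these toy values the early layer favors "hold" and the later layers settle on "cool" with growing confidence, which is the kind of layer-by-layer picture the technique provides.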

We can apply this concept to the problem of chip communication. When anomalies occur in heterogeneous chip coordination, could we also develop a "Chip Interaction Lens" to observe inter-chip behavior? Such a tool could become an indispensable part of future system design.
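One very small piece of such a lens might be a trace analyzer that flags moments when two chips push the same resource in opposite directions. The sketch below is only a thought experiment: the `Decision` record, the `find_conflicts` function, and the 10 ms window are hypothetical, and a real tool would draw its events from hardware trace infrastructure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One logged adjustment made by a chip's model (hypothetical format)."""
    t_ms: int       # timestamp in milliseconds
    chip: str       # which chip made the decision
    resource: str   # shared resource being adjusted
    delta: float    # signed change requested


def find_conflicts(trace: list[Decision], window_ms: int = 10) -> list[tuple[Decision, Decision]]:
    """Return pairs of decisions from different chips that move the same
    resource in opposite directions within window_ms of each other."""
    ordered = sorted(trace, key=lambda d: d.t_ms)
    conflicts = []
    for i, first in enumerate(ordered):
        for second in ordered[i + 1:]:
            if second.t_ms - first.t_ms > window_ms:
                break  # later events are even further away in time
            opposite = first.delta * second.delta < 0
            if first.chip != second.chip and first.resource == second.resource and opposite:
                conflicts.append((first, second))
    return conflicts


if __name__ == "__main__":
    trace = [
        Decision(0, "cpu", "fan_rpm", +500.0),
        Decision(4, "npu", "fan_rpm", -300.0),   # contradicts the CPU 4 ms later
        Decision(50, "gpu", "fan_rpm", -200.0),  # too late to count as a conflict
        Decision(52, "gpu", "power_w", +5.0),
    ]
    for first, second in find_conflicts(trace):
        print(f"{first.chip} vs {second.chip} on {first.resource} at t={first.t_ms}ms")
```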

Three Key Strategies to Solve AI Model Coordination Among Chips

To enable open systems to function smoothly in the integration of heterogeneous AI chips, the following three strategies will play a critical role:

1. Develop observation tools (like a Logit Lens for chips)

Using hardware trace tools, AI debugging frameworks, and visualization platforms to observe chip behavior patterns is the foundation for identifying logical errors and communication blind spots during system integration.

2. Embed AI-aware debug code into firmware

Future firmware (BIOS, UEFI, EC FW) may need to include logic to monitor the behavior of NPUs or AI models. By integrating customized debug modules and analysis code, potential coordination anomalies can be detected early.

3. Implement firmware version management and comparison mechanisms

This is standard practice in traditional software debugging, but it becomes even more crucial in the AI chip era. Every AI module upgrade or rollback version must have a clear comparison and record mechanism to help identify behavioral differences and algorithm conflicts.
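The comparison mechanism in the third strategy can start out very simple. As a minimal sketch, assume each system build is described by a manifest mapping component names to version strings; the component names and versions below are invented for illustration.

```python
from typing import Optional


def diff_manifests(
    old: dict[str, str], new: dict[str, str]
) -> dict[str, tuple[Optional[str], Optional[str]]]:
    """Return {component: (old_version, new_version)} for every component
    that was added, removed, or changed between two manifests."""
    changes = {}
    for component in sorted(set(old) | set(new)):
        before, after = old.get(component), new.get(component)
        if before != after:
            changes[component] = (before, after)
    return changes


if __name__ == "__main__":
    # Hypothetical manifests for a known-good build and a misbehaving one.
    known_good = {"bios": "1.4.0", "ec_fw": "2.1.0", "npu_model": "2024.11"}
    suspect = {
        "bios": "1.4.0",
        "ec_fw": "2.2.0",
        "npu_model": "2025.02",
        "audio_dsp_model": "0.9",
    }
    for component, (before, after) in diff_manifests(known_good, suspect).items():
        print(f"{component}: {before} -> {after}")
```

When a coordination anomaly appears, a diff like this narrows the search to the components whose firmware or model actually changed, which matters more once model updates ship independently of firmware updates.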

Future Challenges and Vision

As AI functionality becomes embedded in every chip, the discussion will no longer be only about a system's "computational power"; it will be about how multiple intelligent agents within the system communicate and reach consensus.

Open systems will be more innovative but also more fragile. Closed systems may integrate more efficiently but could limit diverse development. What we need in the future is a "semi-closed collaborative system" that preserves core integration within openness and seeks standardization through coordination.

We are standing at the threshold of a new era—the language of AI chips is being invented, and understanding how they converse will be the key mission of the next decade.
