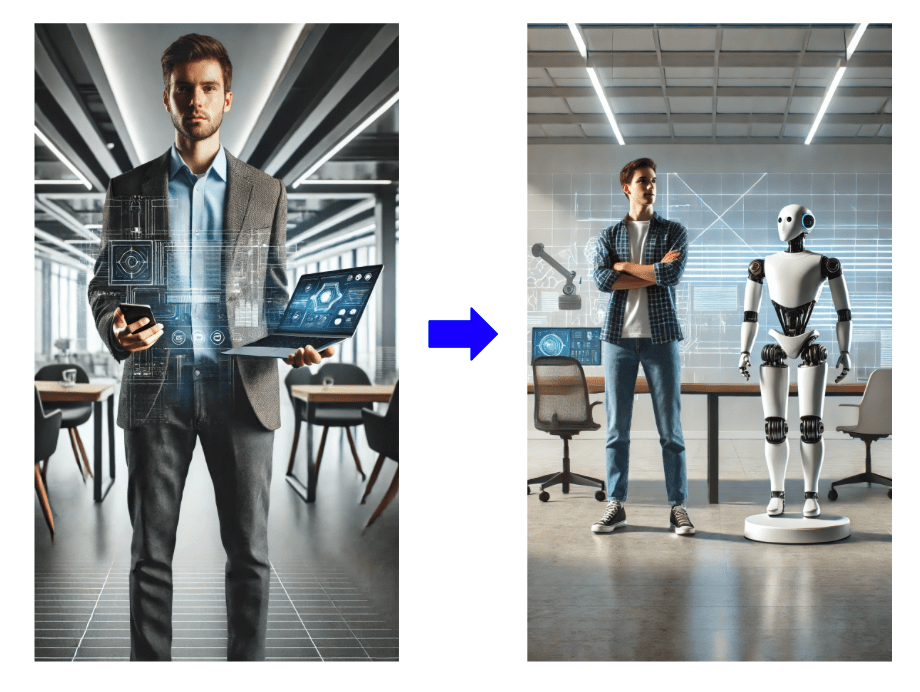

Of the five key indicators for humanoid robot development (Bandwidth, Latency, Economics, Reliability, and Privacy), this post focuses on the final, yet equally critical, indicator: Privacy.

As in human society, privacy is a vital measure of an individual's quality of life, but it often comes at the expense of efficiency. In the development of next-generation AI PC devices, the interplay between the CPU and the OS has introduced numerous challenges, particularly around privacy. Solving these issues requires significant time and R&D resources, which makes privacy and power savings two of the most complex areas in notebook development, as I discussed in my previous post.

In the realm of AI, we typically divide development into three layers based on the computing power each one requires:

- Training large language models (LLMs).

- Fine-tuning.

- Inference and applications.

Among these, edge computing, which runs outside centralized cloud servers, provides better privacy control for fine-tuning and for inference and applications. For humanoid robots, I believe it is essential to develop an independent AI system that does not rely on constant cloud connectivity. This “artificial brain” would shield users and their families from malicious intrusions and preserve their privacy. Current mobile computing solutions may not yet provide a perfect answer, but given the continuous advances in computational speed, I expect such solutions to arrive in the near future.

However, to safeguard privacy effectively, we must establish an abstracted architecture for edge computing akin to UEFI or ACPI.
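To make the idea of an abstracted architecture more concrete, here is a minimal Python sketch of what a vendor-neutral privacy interface might look like. No such standard exists today, so every name in it (`EdgePrivacyInterface`, `PrivacyCapabilities`, `DemoRobotPlatform`, `process_private_data`) is hypothetical. The only point it illustrates is that application code talks to a stable contract and queries capabilities, much as an OS reads platform tables, instead of depending on one vendor's hardware.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from enum import Enum


class Workload(Enum):
    """The three layers of AI development named in this post."""
    TRAINING = "training"
    FINE_TUNING = "fine-tuning"
    INFERENCE = "inference"


@dataclass(frozen=True)
class PrivacyCapabilities:
    """Hypothetical capability record a platform reports to software."""
    encrypted_storage: bool
    isolated_execution: bool
    local_workloads: frozenset  # workloads the device can run on-device


class EdgePrivacyInterface(ABC):
    """Hypothetical vendor-neutral contract, in the spirit of UEFI/ACPI."""

    @abstractmethod
    def capabilities(self) -> PrivacyCapabilities:
        """Describe what the platform can guarantee."""

    @abstractmethod
    def run_local(self, workload: Workload, data: bytes) -> bytes:
        """Run a workload on-device, without sending data to the cloud."""


class DemoRobotPlatform(EdgePrivacyInterface):
    """Stand-in vendor implementation, for illustration only."""

    def capabilities(self) -> PrivacyCapabilities:
        return PrivacyCapabilities(
            encrypted_storage=True,
            isolated_execution=True,
            local_workloads=frozenset({Workload.FINE_TUNING, Workload.INFERENCE}),
        )

    def run_local(self, workload: Workload, data: bytes) -> bytes:
        # Placeholder for real on-device compute: just reverse the bytes.
        return data[::-1]


def process_private_data(
    platform: EdgePrivacyInterface, workload: Workload, data: bytes
) -> bytes:
    """Refuse to handle private data unless it can stay on the device."""
    caps = platform.capabilities()
    if workload not in caps.local_workloads:
        raise PermissionError(f"{workload.value} cannot run locally on this platform")
    if not (caps.encrypted_storage and caps.isolated_execution):
        raise PermissionError("platform lacks the required privacy guarantees")
    return platform.run_local(workload, data)
```

With this sketch, `process_private_data(DemoRobotPlatform(), Workload.INFERENCE, b"abc")` stays on the device, while a `Workload.TRAINING` request is rejected instead of silently falling back to the cloud. A second vendor could ship a different `EdgePrivacyInterface` implementation without any change to the calling code.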

Such a framework would ensure the seamless integration of security measures to protect user privacy, avoiding the issues faced by some current AI PC CPU architectures, which struggle to retrofit security enhancements after the fact.

In conclusion, edge computing is the best solution to address privacy concerns in humanoid robots. To make edge computing effective, we need an abstract interface that fosters the co-evolution of the hardware and software ecosystems. This will allow the system to adapt to increasingly sophisticated malware and data theft attempts, ensuring a secure and private user experience. Based on my experience in PC development, I believe that no single company can solve the challenges of robotics technology on its own. This is why I strongly emphasize the importance of establishing a consensus on the design of abstract interface layers as a foundational element.

Privacy: Everything Has Two Sides (Key Success Indicators for Humanoid Robot Development)

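Of the five key indicators for humanoid robot development (bandwidth, latency, economics, reliability, and privacy), this article focuses on the last but equally critical one: privacy.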

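Just as in human society, privacy is an important measure of an individual's quality of life, but it often comes at the cost of efficiency. In the development of next-generation AI PC devices, the interaction between the CPU and the operating system has already revealed the many challenges that privacy issues bring. Solving these problems takes a great deal of time and R&D resources, so privacy and power saving are often among the most complex and difficult areas of notebook platform development, which is also the point I made in my previous article.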

In AI development, the work can usually be divided into three layers according to the computing resources required:

- Training large language models (LLMs).

- Model fine-tuning.

- Inference and applications.

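Among these, edge computing, meaning local computation that does not depend on centralized cloud servers, offers better privacy control for fine-tuning and for inference and applications.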

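In my view, a humanoid robot must have an independent AI system, a so-called “artificial brain,” that does not need to stay connected to the cloud for long periods. Such a design can effectively protect users and their families from malicious intrusion and keep their privacy secure. Although today's mobile computing technology cannot yet fully achieve this goal, I believe that as computing performance keeps improving, such a system will become a reality in the near future.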

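However, to truly deliver privacy protection, we need to establish an abstraction architecture standard, similar to UEFI or ACPI, that governs how edge computing platforms operate.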

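Such an architecture can integrate security measures seamlessly into the system and avoid the problem that some current AI PC architectures face: security mechanisms are forced in late in development, leaving the system hard to maintain and riddled with vulnerabilities.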

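In summary, edge computing is the best answer to the privacy problem in humanoid robots. To get the most out of edge computing, however, there must be an abstract interface layer that promotes the co-evolution of the hardware and software ecosystems. Such an architecture gives the system the ability to cope with increasingly sophisticated malware and data theft attacks, and so provides a secure and private user experience.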

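Drawing on my hands-on experience in PC platform development, I am convinced that the challenges of humanoid robot technology cannot be solved by any single company alone. That is why I particularly stress that an abstract interface design backed by industry consensus is the foundational work for moving the whole industry forward.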
